Anthropic Announces Major Update: Advisor Strategy for Claude

Late at night, Anthropic officially announced a significant update: Claude’s Advisor Strategy is now live.

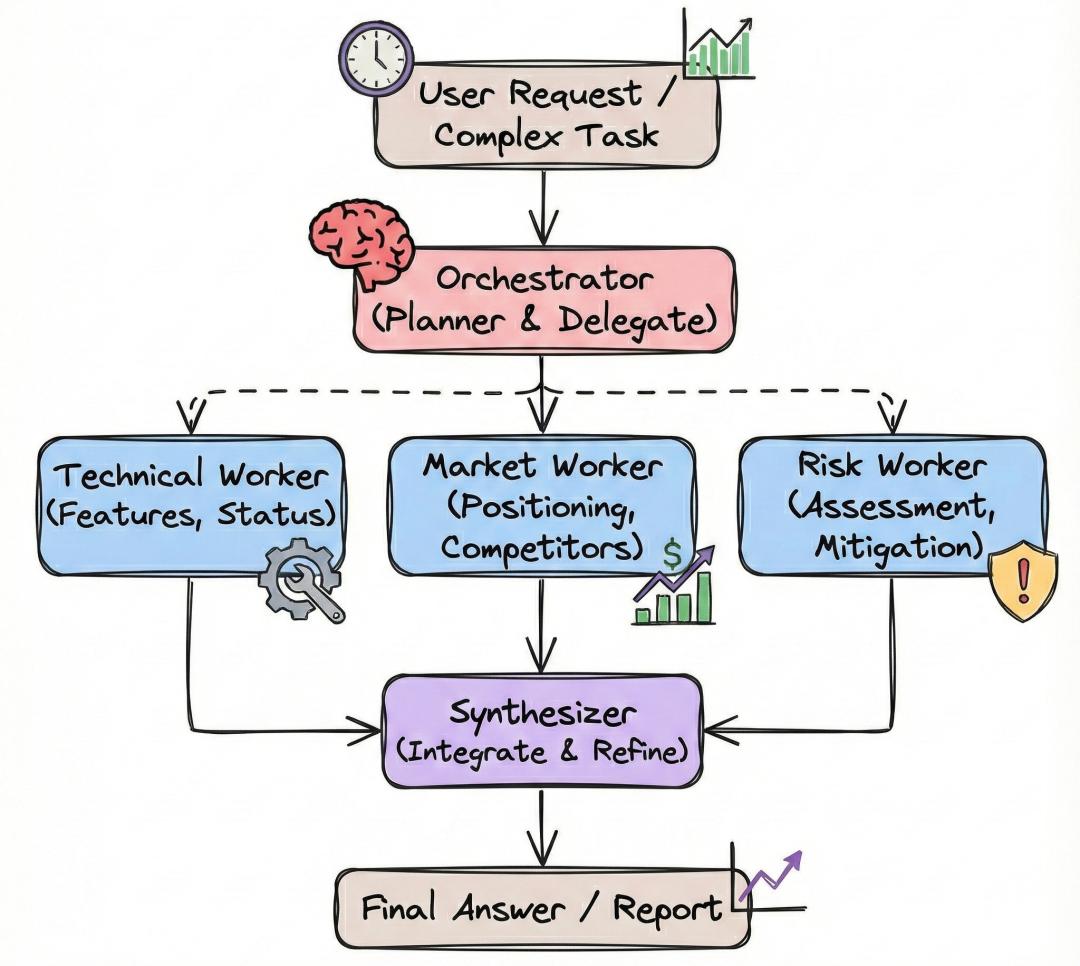

The core logic of this architecture is to have the most capable model, Opus 4.6, act as a “consultant” behind the scenes, while the more cost-effective Sonnet 4.6 or Haiku 4.5 takes the lead as the “executor”.

In simple terms, Opus acts as the “brain”, while Sonnet/Haiku serve as the “hands”.

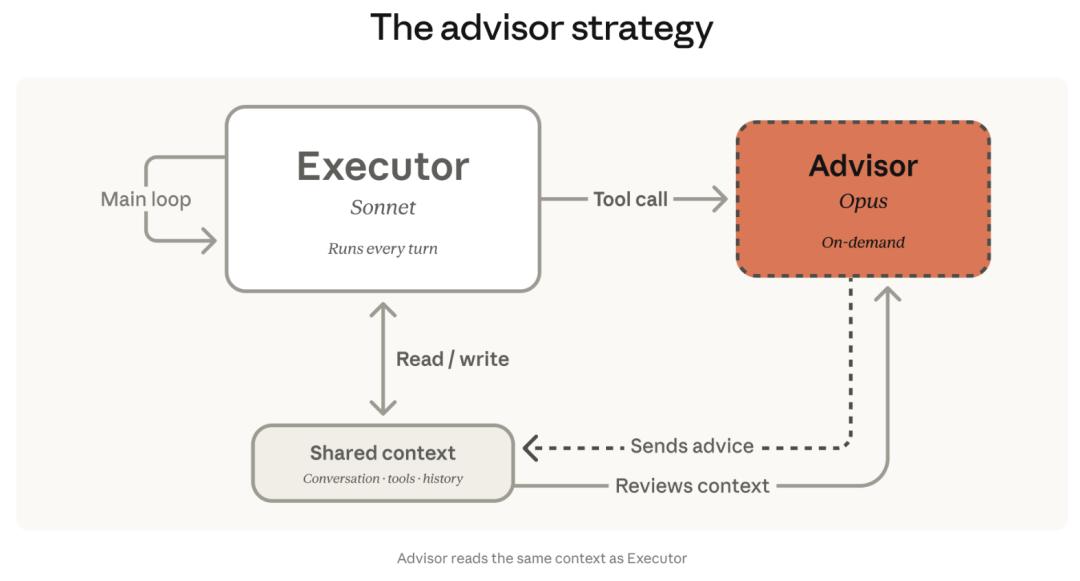

In this new workflow, Sonnet or Haiku carries the core responsibility and manages the entire process end to end.

When faced with truly challenging problems or when reasonable decisions cannot be made, the executors will call upon Opus as a “consultant” via API for guidance.

Opus will quickly review the context, provide a clever strategy or correction plan, and then the executors will continue to complete the remaining tasks.

This strategy reverses the traditional model of “large models breaking down tasks, small models doing the grunt work”.

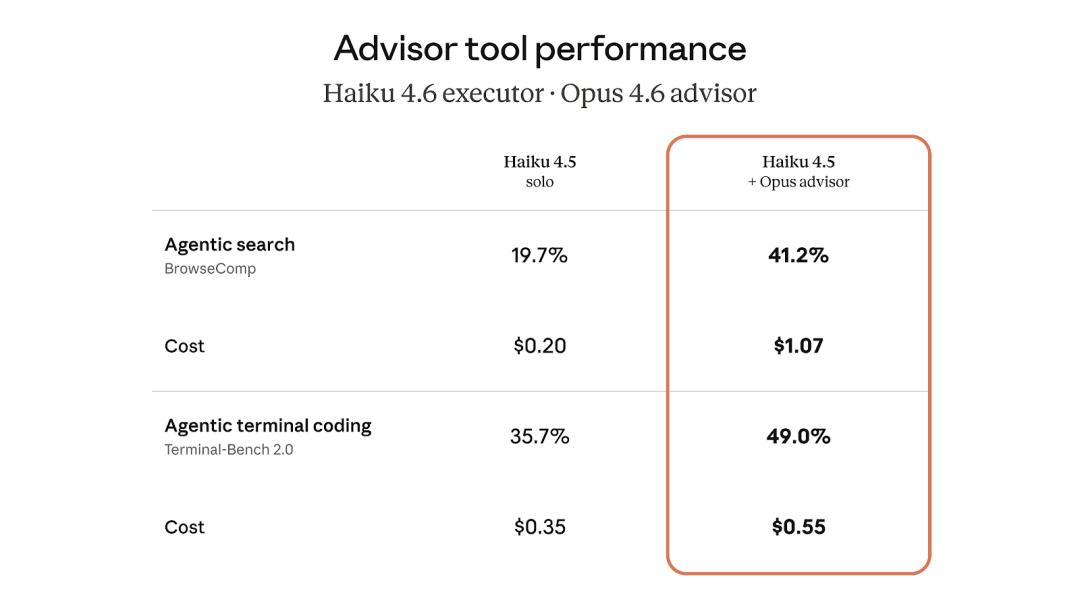

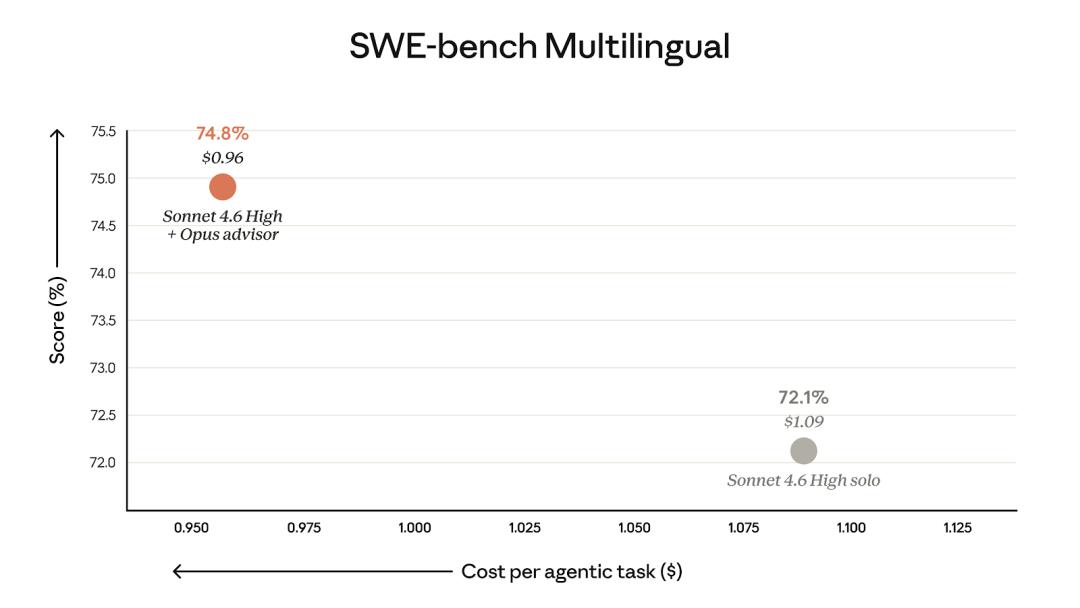

The results from testing are astonishing. In the SWE-bench programming tests, the combination of “Sonnet 4.6 + Opus 4.6” achieved a 2.7% score increase while reducing costs by 11.9%! Even more remarkably, the performance of “Haiku 4.5 + Opus 4.6” doubled, with costs ($1.07) being only a fraction of Sonnet’s ($7).

As one user put it, “enjoy the performance of Opus without paying for Opus”.

Many have been spreading the word that Claude has undergone a significant evolution, giving rise to a better version of OpenClaw.

This update is not just an API enhancement; it represents a complete efficiency revolution.

Claude Now Has a “Consultant”: The Powerful Opus 4.6 Guides from Behind

Developers have long faced a dilemma when building AI agents: using a top-tier model that is smart but expensive, or opting for a lightweight model that is cheaper but may struggle with complex tasks.

The traditional approach involves having the most powerful LLM at the center as the “orchestrator”, breaking down large tasks into smaller sub-tasks and distributing them to smaller, faster models for execution.

This is akin to a project manager (the large model) holding a meeting and assigning different tasks to team members (the small models). The limitation is that regardless of whether the task is simple or complex, the top model must first intervene to break down the task. Each request begins by consuming the most expensive tokens.

Anthropic has adopted a counterintuitive tactic that completely reverses the “large managing small” logic.

The Advisor Strategy employs a more flexible upward escalation mechanism:

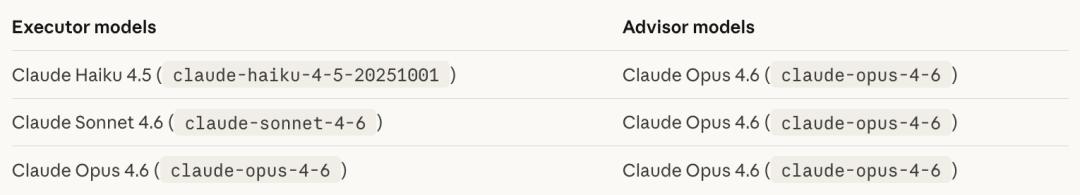

- Executors: Sonnet 4.6 or Haiku 4.5, responsible for end-to-end task execution, tool invocation, result reading, and continuous iteration.

- Consultant: The top model Opus 4.6, lurking in the background, does not directly interact with users or invoke tools.

Only when the executors encounter problems that they cannot independently resolve do they consult the advisor.

Opus reads the shared context, provides plans, correction strategies, or stop signals, and then the executors continue working with these “emergency strategies”.
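The division of labor above can be sketched as a small simulation. This is only an illustration under stated assumptions: in the real feature the executor model itself decides when to consult, whereas here a hypothetical `hard` flag stands in for that decision, and `stub_advisor` stands in for Opus. None of these names come from Anthropic's API.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Step:
    description: str
    hard: bool = False  # hypothetical stand-in for "executor cannot resolve this alone"


@dataclass
class Transcript:
    events: List[str] = field(default_factory=list)
    advisor_calls: int = 0


def run_task(steps: List[Step],
             advisor: Callable[[List[str]], str],
             max_advisor_calls: int = 2) -> Transcript:
    """The executor walks every step; the advisor is consulted only on hard ones."""
    transcript = Transcript()
    for step in steps:
        if step.hard and transcript.advisor_calls < max_advisor_calls:
            # The advisor sees the shared context and returns a short plan.
            plan = advisor(transcript.events + [step.description])
            transcript.advisor_calls += 1
            transcript.events.append(f"advisor: {plan}")
        # The executor always performs the step itself; the advisor never does.
        transcript.events.append(f"executor: {step.description}")
    return transcript


def stub_advisor(context: List[str]) -> str:
    """Placeholder for the consultant model."""
    return f"plan for '{context[-1]}'"


if __name__ == "__main__":
    steps = [Step("read file"), Step("fix race condition", hard=True), Step("run tests")]
    for event in run_task(steps, stub_advisor).events:
        print(event)
```

The point of the sketch is the control flow: the cheaper model owns the loop from start to finish, and the expensive model contributes only a plan at the moments it is asked.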

This strategy precisely applies cutting-edge reasoning capabilities where they are most needed.

In the SWE-bench tests, the “Sonnet + Opus Consultant” combination improved scores by 2.7% while reducing the cost of single-agent tasks by 11.9%.

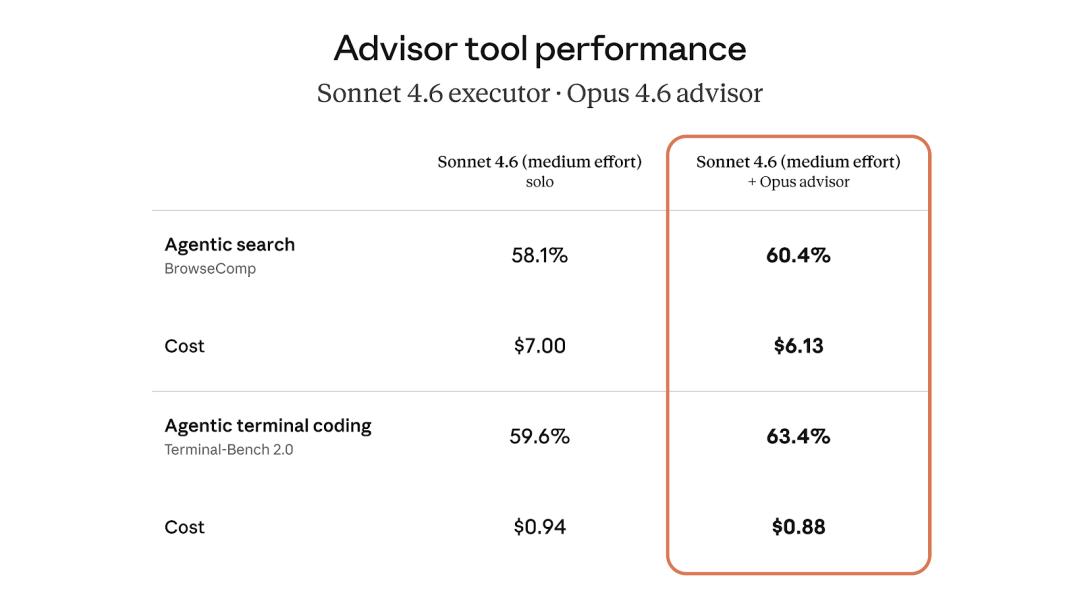

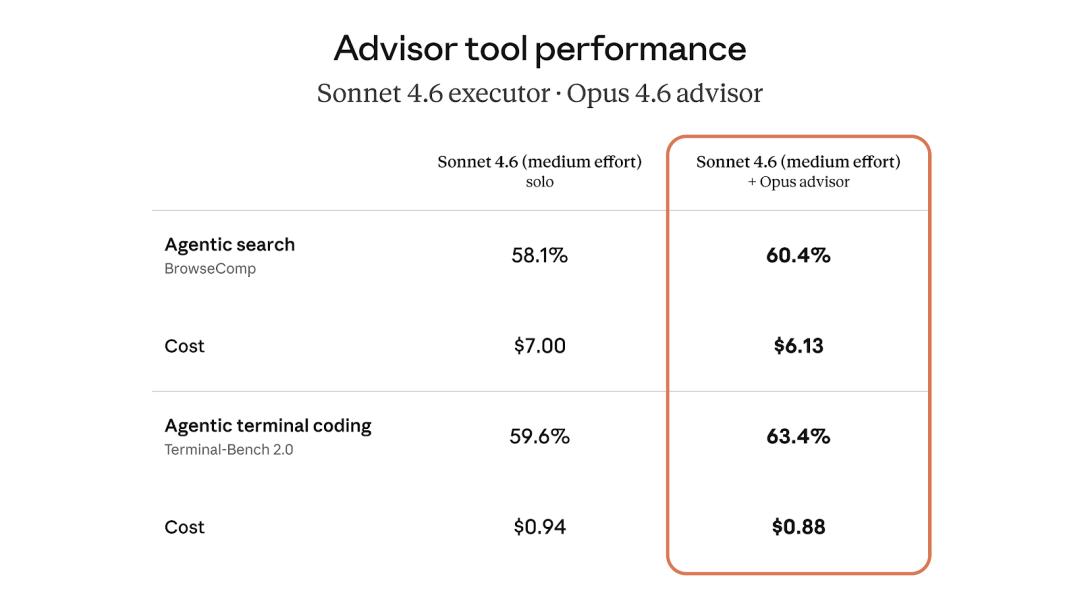

In the agent benchmark tests:

- For the agent search task (BrowseComp), performance increased by 2.3%, costing $6.13.

- For the terminal coding task (Terminal-Bench 2.0), performance increased by 3.8%, costing $0.88.

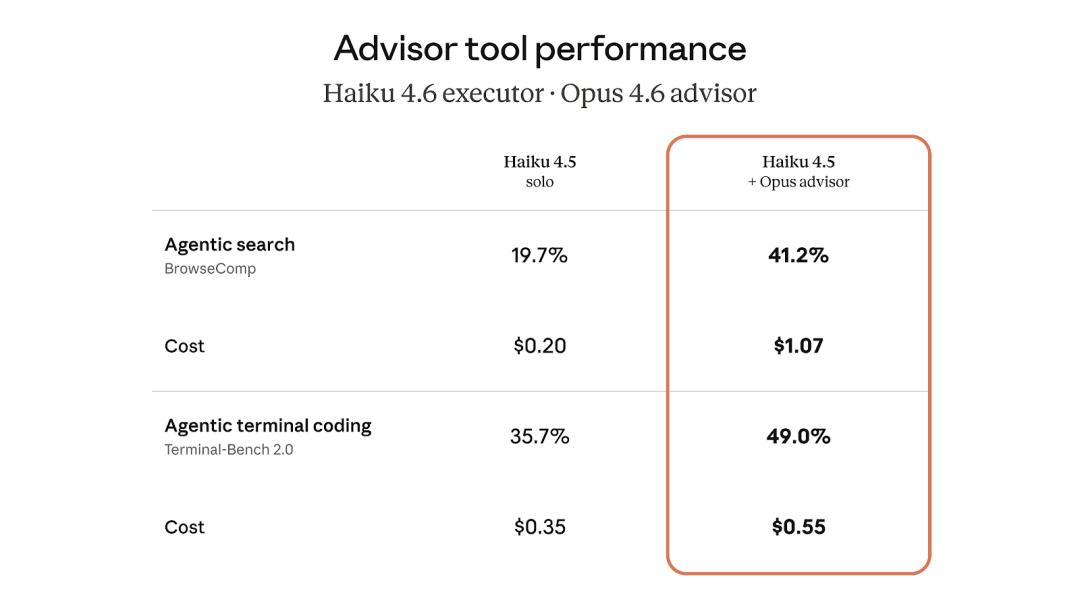

For budget-sensitive scenarios, the combination of “Haiku 4.5 + Opus 4.6 Consultant” performed remarkably well. In the BrowseComp test, its score skyrocketed from 19.7% to 41.2%, effectively doubling performance.

Although this score is 29% lower than Sonnet’s standalone performance, its cost was reduced by 85%, making it an excellent solution for handling high-concurrency tasks.

In Terminal-Bench 2.0, performance surged by 13.3%, with costs decreasing by $0.2.

For large-scale batch tasks that require a certain level of intelligence while controlling costs, Haiku is undoubtedly an excellent choice.

Anthropic stated plainly on their official blog that this allows AI agents to possess Opus-level intelligence while keeping token expenses close to Sonnet’s level.

This is indeed a very appealing proposition!

One Line of Code to Call

So, how can developers get started with this?

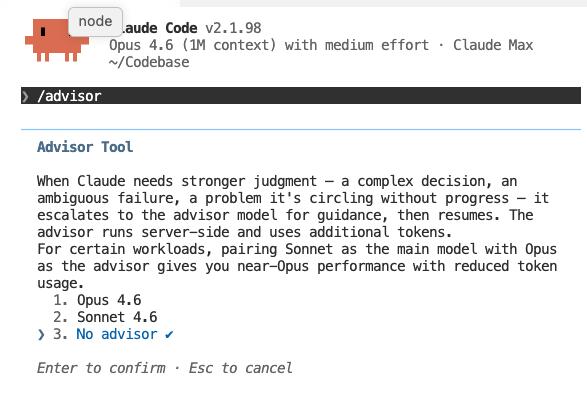

Currently, the Advisor Strategy is available in beta on the Claude platform. By changing just “one line of code” in the API call, developers can start using it. Specifically, declaring advisor_20260301 in a Messages API request lets the model handoff happen silently within a single /v1/messages request, with no extra round trips and no context management on the developer's side.

The executor model will decide when to call the consultant.

When the executor initiates a call, the curated context is routed to the consultant model and the resulting plan is returned, so the executor can continue working. All of this is completed seamlessly within the same request.

So how is token consumption calculated?

The tokens consumed by the consultant are priced according to Opus, while the executor’s tokens are priced according to Sonnet or Haiku. The key point is that the consultant generates a brief plan each time, typically around 400 to 700 tokens.

The bulk of the output is handled by the executor at a lower rate.

Overall, the cost is far lower than using Opus from start to finish.
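A rough worked example makes the billing split concrete. The per-million-token prices below are placeholders chosen for illustration, not Anthropic's published rates, and the sketch counts output tokens only; the input tokens the consultant reads would add to the Opus-priced side.

```python
def blended_output_cost(executor_tokens: int,
                        advisor_calls: int,
                        tokens_per_plan: int,
                        executor_price_per_mtok: float,
                        advisor_price_per_mtok: float) -> float:
    """Output-token cost when the executor writes the bulk and the advisor writes short plans."""
    executor_cost = executor_tokens / 1_000_000 * executor_price_per_mtok
    advisor_cost = advisor_calls * tokens_per_plan / 1_000_000 * advisor_price_per_mtok
    return executor_cost + advisor_cost


if __name__ == "__main__":
    # Hypothetical prices (USD per million output tokens), for illustration only.
    EXECUTOR_PRICE = 3.0
    ADVISOR_PRICE = 15.0

    mixed = blended_output_cost(
        executor_tokens=20_000,
        advisor_calls=3,
        tokens_per_plan=700,  # upper end of the 400 to 700 token range cited above
        executor_price_per_mtok=EXECUTOR_PRICE,
        advisor_price_per_mtok=ADVISOR_PRICE,
    )
    # Same output volume produced entirely at the advisor's rate.
    advisor_only = 20_000 / 1_000_000 * ADVISOR_PRICE
    print(f"mixed: ${mixed:.4f}  advisor-only: ${advisor_only:.4f}")
```

Under these assumed numbers the consultant's three plans account for about a third of the mixed bill, yet the total remains well under the all-Opus figure, which is the effect the article describes.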

Concerned about the consultant being called too many times? Anthropic has thought of that too.

Developers can set max_uses to limit the maximum number of times the consultant can be called in a single request. Additionally, the token consumption of the consultant will be separately listed in the usage information, making it easy to track the expenses of each model layer.

Moreover, the advisor tool is fully compatible with your existing tool stack. It is just a regular entry in the Messages API request, with no special architectural requirements.

Your agent can search the web, execute code, and consult Opus all within the same loop without interference.
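As a sketch of what such a request might look like, the snippet below builds a Messages API payload without sending it. Only `advisor_20260301`, `max_uses`, and `/v1/messages` come from the article; the `name` and `model` fields on the advisor entry, the placement of `max_uses`, the model ID placeholders, and the `run_tests` tool are assumptions made for illustration, so check Anthropic's documentation for the actual schema and any required beta header.

```python
import json


def build_request(executor_model: str, advisor_model: str,
                  max_uses: int, user_prompt: str) -> dict:
    """Assemble a hypothetical /v1/messages body with an advisor entry among the tools."""
    advisor_tool = {
        "type": "advisor_20260301",  # identifier named in the article
        "name": "advisor",           # assumed field
        "model": advisor_model,      # assumed field for choosing the consultant
        "max_uses": max_uses,        # named in the article; placement assumed
    }
    # A hypothetical custom tool, showing the advisor sitting beside ordinary tools.
    run_tests_tool = {
        "name": "run_tests",
        "description": "Run the project's test suite and return the results.",
        "input_schema": {"type": "object", "properties": {}},
    }
    return {
        "model": executor_model,
        "max_tokens": 2048,
        "tools": [advisor_tool, run_tests_tool],
        "messages": [{"role": "user", "content": user_prompt}],
    }


if __name__ == "__main__":
    request = build_request(
        executor_model="<sonnet-4.6-model-id>",  # placeholder, not a real model ID
        advisor_model="<opus-4.6-model-id>",     # placeholder, not a real model ID
        max_uses=3,
        user_prompt="Fix the failing test in the parser module.",
    )
    print(json.dumps(request, indent=2))
```

Keeping the payload construction in one function makes it easy to adjust the cap per workload, for example a lower `max_uses` for high-volume batch jobs.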

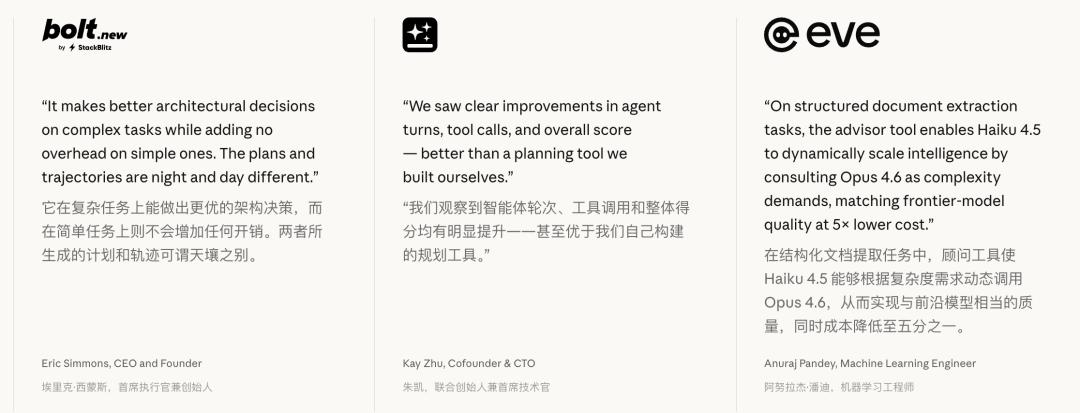

Some major clients who have adopted the Advisor Strategy were instantly amazed, with EVE’s machine learning engineer stating that using Haiku 4.5 + Opus 4.6 reduced costs by 1/5 while achieving near Opus-level intelligence.

Agents No Longer Need to Keep Polling; Background Scripts Will Handle It

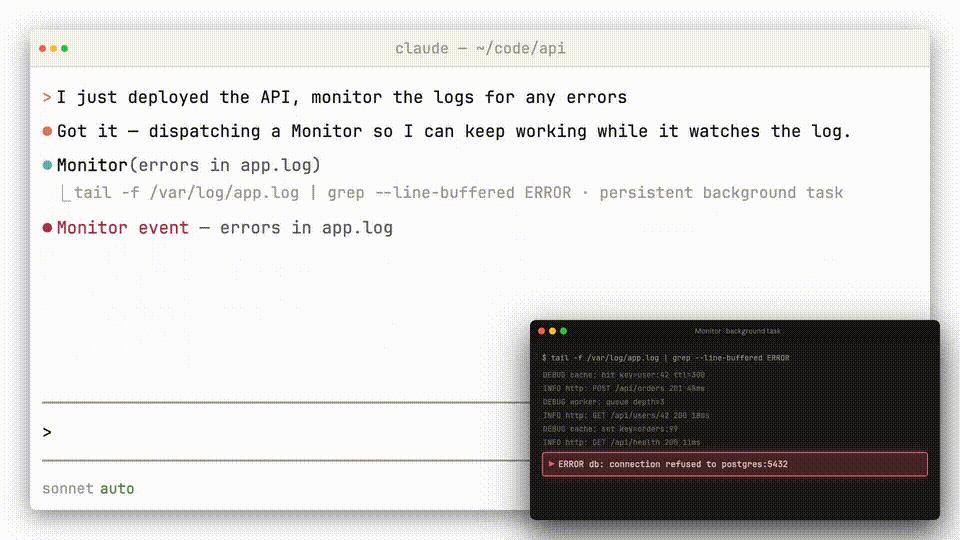

On the same day, Anthropic introduced a heavyweight tool update for Claude called Monitor.

This feature allows Claude to create and run background scripts.

In the past, to monitor a task (like waiting for CI to finish or PR approval), the agent had to continuously loop and ask, burning through tokens with each inquiry.

Monitor allows Claude to write a piece of background monitoring code, and the agent is woken only when something happens: if an error occurs, it wakes up; if the code compliance checks pass, it wakes up.

This shifts from “active polling” to “event-driven”.

With Monitor, Claude can do two things:

- Continuously monitor for errors in system logs, only calling the agent when issues arise.

- Automatically track the status of PRs on GitHub, with scripts polling in the background, so the agent itself does not consume tokens.
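The shift from polling to event-driven wake-ups can be illustrated with a tiny watcher. This is not Monitor's actual implementation or interface; `watch_log` and `wake_agent` are hypothetical names, and a real script would read from a live log file or a CI/PR status endpoint rather than a list.

```python
from typing import Callable, Iterable


def watch_log(lines: Iterable[str],
              wake_agent: Callable[[str], None],
              pattern: str = "ERROR") -> int:
    """Scan log lines cheaply; call wake_agent only for lines containing the pattern.

    Returns the number of lines scanned.
    """
    scanned = 0
    for line in lines:
        scanned += 1
        if pattern in line:
            # The only point at which the (token-consuming) agent gets involved.
            wake_agent(line.rstrip("\n"))
    return scanned


if __name__ == "__main__":
    wake_ups = []
    log = ["INFO start\n", "ERROR disk full\n", "INFO done\n"]
    total = watch_log(log, wake_ups.append)
    print(f"scanned {total} lines, woke the agent {len(wake_ups)} time(s)")
```

The script itself does all the waiting and filtering, so the model is consulted once per relevant event instead of once per check.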

To use it, you need to ask for it explicitly in the prompt, as shown in the examples provided by Anthropic researchers.

Like the Advisor Strategy, this logic aims to find “money-saving segments” in agent operations and separate them out.

One saves on model invocation costs, while the other saves on idle loop costs.

However, the Advisor Strategy and Monitor are not isolated moves. Coupled with the previously released Managed Agents, where Anthropic handles all agent operations and infrastructure for just $0.08 per hour, the direction becomes clear.

Anthropic is no longer just a company selling model APIs. They are building a complete agent runtime platform, covering everything from model scheduling to task execution to cloud hosting.

You No Longer Need to Manage Agents Yourself

The Advisor Strategy and Monitor optimize how agents operate, while Managed Agents address the more fundamental issue of who manages the infrastructure.

At $0.08 per session hour, with sandbox isolation, automatic disconnection recovery, and sessions capable of running autonomously for hours, Anthropic covers it all.

Managed Agents handle operations, while MCP Connectors manage tool integration.

Anthropic’s Connectors Directory covers tools like Asana, Notion, and Sentry, with standard OAuth for one-click integration.

On the other hand, on April 4, they cut off OpenClaw's access to Claude through subscription plans, forcing users to either switch backends or pay by usage, effectively doubling costs.

This combination of strategies pushes their ecosystem while cutting off competitors’ supplies.

As one user summarized on HN, “the core is not about eliminating competitors but getting developers accustomed to running agents on Anthropic’s platform.”

From Selling Models to Selling Runtime

The Advisor Strategy manages scheduling, Monitor enhances efficiency, Managed Agents handle infrastructure, and MCP Connectors manage the ecosystem. Together, they form a complete agent platform.

Anthropic is not just selling chatbots; they are offering a solution where you only need to say what you want to do, and they handle the rest.

Moreover, their ambitions may extend beyond software. According to a Reuters report this week, Anthropic is exploring self-developed AI chips, which are still in early stages.

The numbers behind this ambition: annual revenue now exceeds $30 billion, up from $9 billion at the end of last year, and their share of enterprise AI revenue has drawn level with OpenAI's at 50:50.

Whether this strategy will succeed depends on developers’ willingness to entrust agent logic to Anthropic’s platform.

Sentry, Notion, and Rakuten have already cast their votes.

Easter Egg

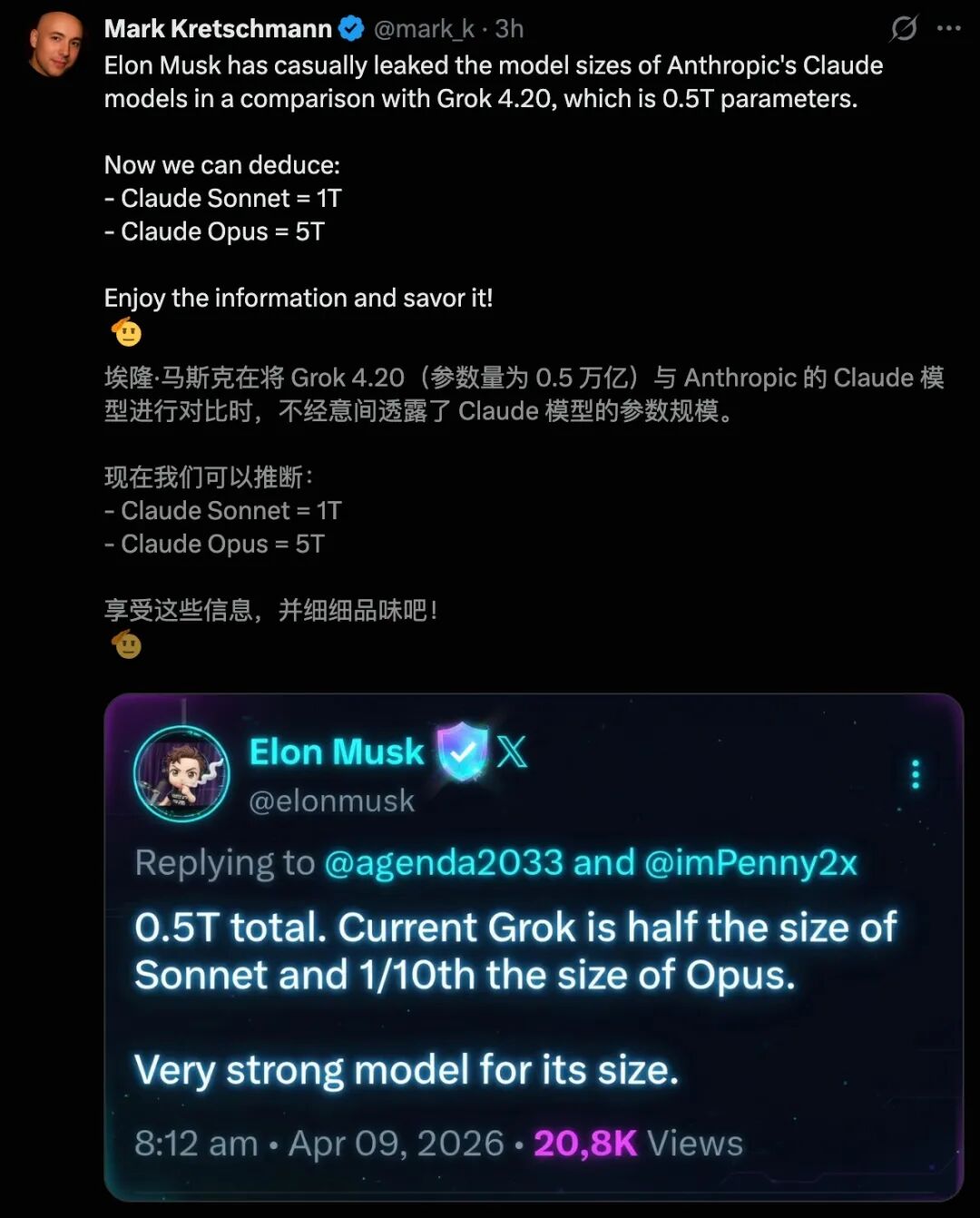

How big is Claude? This is the most sought-after black box in the AI community.

Elon Musk casually revealed a number while comparing his Grok 4.2 with Claude:

By his account, Claude Sonnet has approximately 1 trillion parameters, while Opus boasts a staggering 5 trillion.

Some experts speculate that Claude Mythos’s size is at least 10 trillion, or even larger.
